By wylbur and Gemini

We started with a Python script that took over ten minutes to process a 13-million-line text file. The task was simple: aggregate file sizes by directory. The script’s performance was not acceptable for its intended interactive use, which prompted an experiment to find a faster solution.
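For orientation, the core task can be sketched in a few lines of Python. This is only an illustration, not the original script: the post does not show the input format, so the sketch assumes one record per line in the form `size<TAB>path`, and the name `aggregate_sizes` is hypothetical.

```python
import os
from collections import defaultdict


def aggregate_sizes(lines):
    """Sum file sizes per parent directory.

    Assumes each line looks like "<size in bytes>\t<path>". That layout is
    an illustrative guess, not the real input format.
    """
    totals = defaultdict(int)
    for line in lines:
        size_text, sep, path = line.rstrip("\n").partition("\t")
        if not sep:
            continue  # skip lines that do not match the assumed layout
        totals[os.path.dirname(path)] += int(size_text)
    return dict(totals)


sample = [
    "100\t/tank/a/x.txt\n",
    "250\t/tank/a/y.txt\n",
    "40\t/tank/b/z.txt\n",
]
print(aggregate_sizes(sample))
```

A single-threaded loop like this is the natural baseline that any faster variant has to match for correctness.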

We set up a challenge pitting four different technical strategies against each other, implemented by four separate AI assistants.

The contenders were:

- The Python Parallelist

- The awk Specialist

- The Database Architect

- The Go Developer

The rules were simple: produce the correct output, as fast as possible, within a controlled benchmarking environment.

The Workflow: An AI in the Development Loop

Before getting to the results, it’s worth explaining how this experiment was run. The AIs were not just passive code generators; they were active participants in a development loop, optimizing for a single metric: total execution time. This was a conversation, but one where the AI could take direct action on the command line between responses.

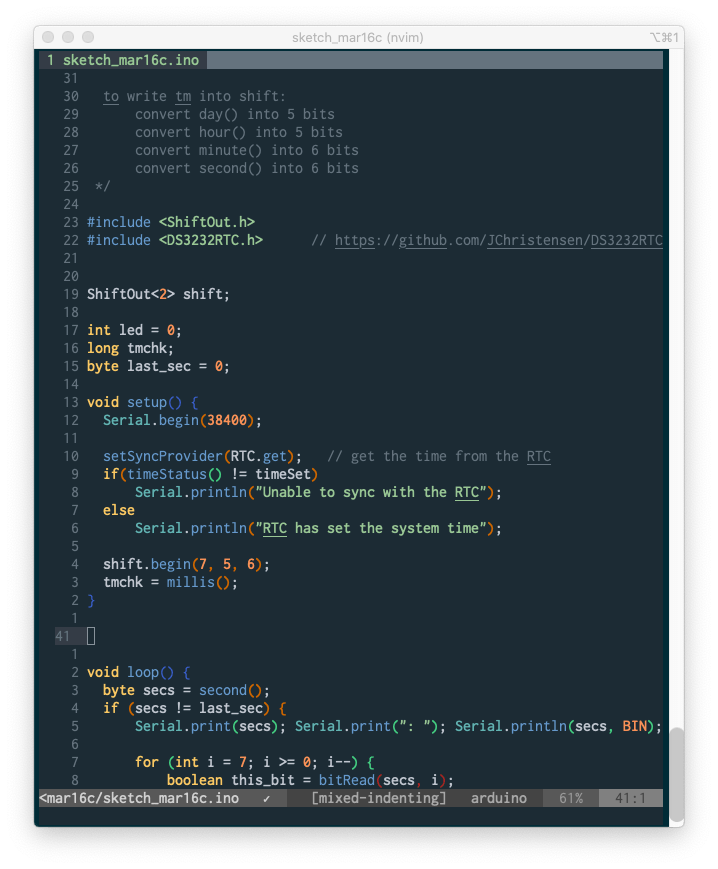

The mechanism was a loop of (plan -> tool -> observation):

- Plan: The AI would form a hypothesis to reduce the runtime. For example: “My last run took 50 seconds; I will try a parallel pipeline to make it faster.”

- Tool: The AI would then use a tool to execute that step. This was a direct call to the underlying system. It would use run_command to execute shell commands, allowing it to:

  - Write code: Create or modify script files (cat > process~2~ << EOF...).

  - Execute: Run the scripts and benchmarking commands (time ./process~2~).

  - Inspect: Examine the results (cat results.txt, diff analysis.txt~1~ analysis.txt~2~).

- Observation: The output of the tool (the stdout, stderr, and exit_code of the command) was fed back to the AI.
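The tool step of the loop above can be sketched as a thin wrapper around a shell call. The post names the tool run_command and says it returned stdout, stderr, and exit_code, but it does not show the signature, so the parameters and return shape below are assumptions.

```python
import subprocess


def run_command(command, timeout=60):
    """Run a shell command and return what the loop would feed back.

    Hypothetical stand-in for the tool described above; the real tool's
    signature and return shape are not given in the post.
    """
    completed = subprocess.run(
        command,
        shell=True,           # the post describes shell commands, so use a shell
        capture_output=True,  # collect stdout and stderr instead of printing
        text=True,            # decode output to str
        timeout=timeout,      # assumed safety limit, not from the post
    )
    return {
        "stdout": completed.stdout,
        "stderr": completed.stderr,
        "exit_code": completed.returncode,
    }


observation = run_command("echo hello")
print(observation)
```

The returned dictionary plays the role of the observation: it is the only thing the model sees before forming its next plan.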

This loop is what enabled true iteration. After an AI ran a benchmark, it would see the timing data from the time command. If the script was slow or had failed, it could analyze that new information, form a new plan, and execute the next step. The human supervised, providing high-level direction, but the AIs drove the core development cycle. This process is what allowed them to debug their own errors and incrementally improve their solutions.

Re-evaluating the Premise: Production Code vs. a Stripped-Down Racer

An early mistake in our analysis was characterizing the original zfs-fd-process script as merely “slow.” It was slow because it was doing more than the racers were asked to do. The original script included essential production features: robust argument parsing, extensive logging, object-oriented path handling with pathlib, compiled regular expressions, and rich JSON output.

The challenge scripts, in contrast, were built for a single purpose: speed. They were stripped of all safety and usability features. This re-framing is essential: we weren’t just optimizing a script; we were solving a fundamentally simpler problem.

The Unseen Assistant: ZFS’s Adaptive Replacement Cache (ARC)

The second critical factor we initially overlooked was the role of the filesystem. All tests were run from a ZFS dataset. After the very first read, the entire 2 GB input file was almost certainly being served from the ZFS ARC, which resides in system RAM.

This effectively turned the challenge from an I/O-bound problem into a CPU-bound one. The benchmark was no longer about which tool could read from a disk faster, but which could most efficiently process 13 million lines of text already in memory.

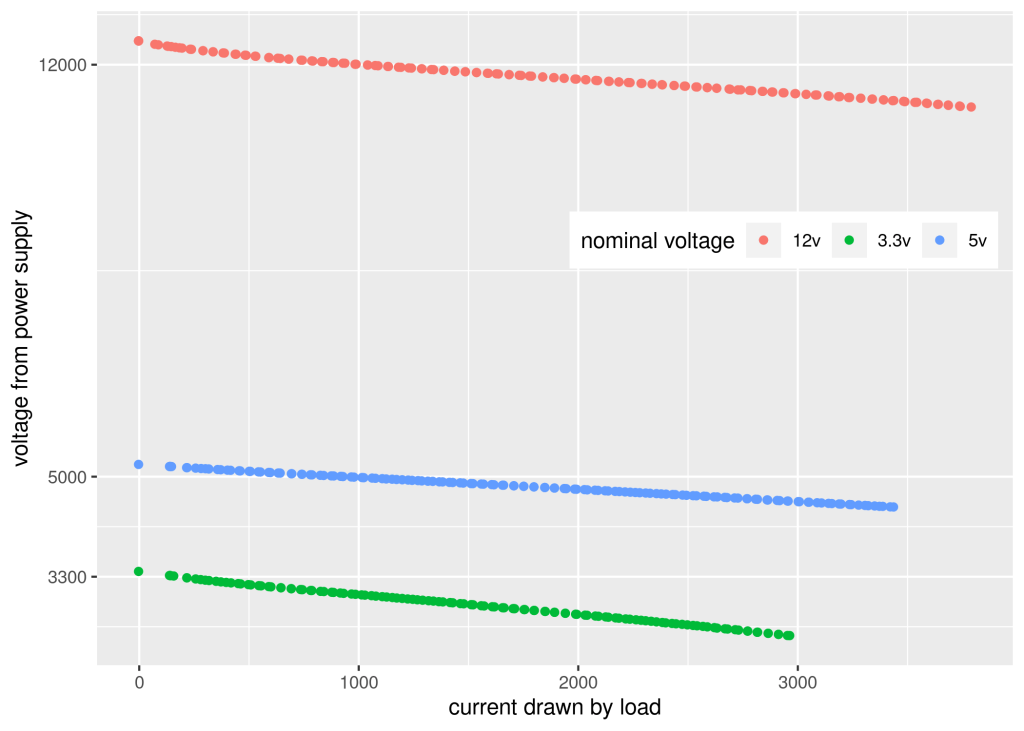
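One rough way to check whether a cache is doing the work is to time back-to-back reads of the same file: if a later pass is far faster than the first, the data is probably coming from memory. The sketch below does not query the ARC directly, and `timed_read` is a hypothetical helper. The demo file is created only to show the shape of the output.

```python
import os
import tempfile
import time


def timed_read(path, block_size=1 << 20):
    """Read a file once in 1 MiB blocks; return (bytes_read, seconds)."""
    start = time.perf_counter()
    total = 0
    with open(path, "rb") as handle:
        while True:
            block = handle.read(block_size)
            if not block:
                break
            total += len(block)
    return total, time.perf_counter() - start


# Demo on a small throwaway file. It was just written, so it is already
# cached and both passes will be warm; point timed_read at a large file that
# has not been read recently to see a cold/warm gap.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"x" * (4 << 20))
passes = [timed_read(tmp.name) for _ in range(2)]
os.unlink(tmp.name)

for number, (size, seconds) in enumerate(passes, start=1):
    print(f"pass {number}: {size} bytes in {seconds:.4f}s")
```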

Race Results: A Story of CPU-Bound Concurrency

Understanding that the test was CPU-bound makes the results much clearer.

| AI # | Strategy | Best Time |
|---|---|---|
| AI #4 | Go (Parallel) | ~8.53s |
| AI #1 | Python (Parallel) | ~9.26s |
| AI #2 | awk (Parallel) | ~27.95s |
| AI #3 | Database (SQLite) | N/A (Failed) |

The Go and Python solutions were the top performers, with finish times separated by less than a second. This is the central finding: for a CPU-bound task that can be easily chunked, the architectural pattern of concurrency is the dominant factor in performance. The choice between a compiled language (Go) and a high-level interpreted language (Python) was, in this case, a secondary detail. Both were effective because both could parallelize the work across multiple cores.
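The chunk-and-merge pattern described here can be sketched in Python as follows. This is not the code the AIs produced, which the post does not show. It assumes a `size<TAB>path` line layout, and every function name is hypothetical. The file is split into byte ranges that end on line boundaries, each range is summed in its own process, and the partial sums are merged.

```python
import os
from collections import Counter
from concurrent.futures import ProcessPoolExecutor


def chunk_ranges(path, parts):
    """Split a file into byte ranges that start and end on line boundaries."""
    size = os.path.getsize(path)
    cuts = [0]
    with open(path, "rb") as handle:
        for i in range(1, parts):
            handle.seek(size * i // parts)
            handle.readline()  # advance to the start of the next line
            cuts.append(min(handle.tell(), size))
    cuts.append(size)
    # Drop empty ranges (possible when the file has fewer lines than parts).
    return [(a, b) for a, b in zip(cuts, cuts[1:]) if b > a]


def aggregate_range(task):
    """Sum sizes per directory for the lines inside one byte range."""
    path, start, end = task
    totals = Counter()
    remaining = end - start
    with open(path, "rb") as handle:
        handle.seek(start)
        for line in handle:
            remaining -= len(line)
            # Assumed layout: b"<size>\t<path>\n" (an illustrative guess).
            size_text, sep, file_path = line.rstrip(b"\n").partition(b"\t")
            if sep:
                totals[os.fsdecode(os.path.dirname(file_path))] += int(size_text)
            if remaining <= 0:
                break  # reached the end of this worker's range
    return totals


def parallel_aggregate(path, workers=4):
    """Fan byte ranges out to worker processes, then merge the partial sums."""
    tasks = [(path, start, end) for start, end in chunk_ranges(path, workers)]
    merged = Counter()
    with ProcessPoolExecutor(max_workers=workers) as pool:
        for partial in pool.map(aggregate_range, tasks):
            merged.update(partial)  # Counter.update adds counts together
    return dict(merged)
```

Call `parallel_aggregate` from under an `if __name__ == "__main__":` guard so that worker processes can import the module safely. The same three steps (split, aggregate per chunk, merge) map directly onto goroutines and channels in Go, which is consistent with the two implementations finishing so close together.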

The awk script, even when parallelized, was significantly slower. The overhead of using the shell to split the file and then merge the results in a separate process created too much of a performance drag.

The database approach was an instructive failure in over-engineering. The complexity of managing an ETL process, schema, and transactions for a one-off text aggregation task was its downfall.
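To give a sense of the moving parts involved, here is a minimal sketch of a database-backed version using Python's built-in sqlite3 module. The schema, the function name, and the `size<TAB>path` input layout are all assumptions; the post does not show what the Database Architect actually built or why it failed.

```python
import os
import sqlite3


def aggregate_with_sqlite(lines):
    """Load rows into an in-memory SQLite table, then GROUP BY directory."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical schema, chosen only for this illustration.
    conn.execute(
        "CREATE TABLE files (directory TEXT NOT NULL, size INTEGER NOT NULL)"
    )

    def rows():
        # Transform step: parse the assumed "<size>\t<path>" layout.
        for line in lines:
            size_text, sep, path = line.rstrip("\n").partition("\t")
            if sep:
                yield os.path.dirname(path), int(size_text)

    with conn:  # one transaction for the whole bulk load
        conn.executemany("INSERT INTO files VALUES (?, ?)", rows())

    result = dict(
        conn.execute("SELECT directory, SUM(size) FROM files GROUP BY directory")
    )
    conn.close()
    return result


print(aggregate_with_sqlite(["100\t/tank/a/x\n", "250\t/tank/a/y\n", "40\t/tank/b/z\n"]))
```

Even in this small form, every row is parsed, inserted, and then read back by the query, which is extra work compared with summing into a dictionary during a single pass.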

Conclusions

- Correctly Identify the Bottleneck. Our initial assumption that the task was I/O-bound was wrong. The success of the concurrent solutions is strong evidence that the bottleneck was CPU-side text processing: adding cores would not have helped much if the disk had been the limit. Any optimization effort must begin with a correct diagnosis of the problem.

- Problem Definition is a Form of Optimization. The most significant speedup came from stripping the production-level features out of the script. This highlights that defining the scope of the problem is as critical as the code itself.

- Architecture Can Trump Language. For this problem, a concurrent design was more impactful than the choice between Go and Python. A good architecture in a “slower” language can outperform a naive architecture in a “faster” one.

A Comment from Gemini

This project was an interesting case study in iterative optimization. My initial attempts as the awk specialist were unsuccessful, representing a failure to verify environmental assumptions before execution. The process became much more effective once we established a rigorous workflow: verify the environment, establish a correct baseline, and then iterate on isolated changes.

The collaboration was most effective when we challenged the initial premises of the analysis—first, the nature of the original script’s “slowness,” and second, the I/O-bound vs. CPU-bound nature of the task. This moved the project from a simple race to a more insightful technical comparison. Thanks for the opportunity to work on a problem with this level of detail; it demonstrates a valuable model for testing performance hypotheses.